Core Architecture and Reliability Comparison Between ZFS and Btrfs: Technical Features and Real-World Deployment Considerations

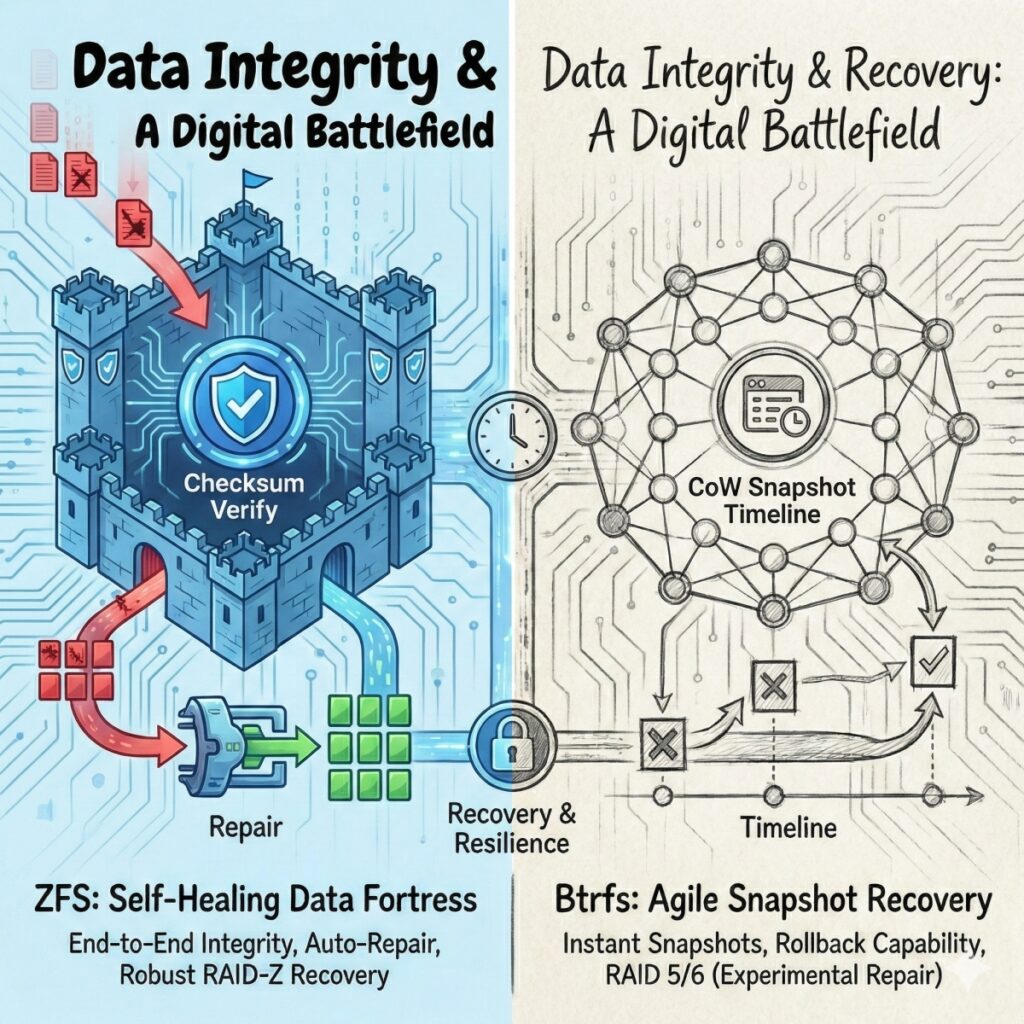

In the battleground of modern storage technologies, Btrfs and ZFS / OpenZFS undoubtedly stand as two of the most dominant powerhouses. Although both are built upon the core philosophy of Copy-on-Write (CoW) and offer self-healing and snapshot capabilities, they exhibit distinctly different engineering DNA when dealing with the physical storage layer (e.g., RAID5/RAID50) and device expansion.

Let us examine the differences between these two file systems across four major dimensions: write mechanisms, cost of expansion, the RAID write hole, and data integrity.

1. Philosophical Differences in Write Mechanisms: Flexibility vs. Efficiency

Btrfs and ZFS adopt fundamentally different strategies when handling data writes, and this distinction directly determines their respective use cases.

Btrfs: Tetris-like Filling

Btrfs abstracts physical devices into 1 GiB logical units known as “chunks”. This design allows it to dynamically determine the stripe width at write time based on the number of currently available disks.

The key advantage is exceptional hardware flexibility. Within a single storage pool, you can mix drives of different capacities, and Btrfs will maximize space utilization like playing Tetris.

Architecturally, it lacks a standalone RAID50 profile; instead, it aggregates multiple RAID5 chunks, effectively functioning as a parallel RAID50.
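To make the Tetris analogy concrete, here is a minimal sketch of how chunk-based allocation can use mixed-size drives. It assumes a deliberately simplified model: each allocation round takes one equal-sized slice from every device that still has free space, and one slice per round holds parity. The function name, the slice size, and the two-device minimum are illustrative assumptions; the real Btrfs allocator also handles metadata chunks and other details not modeled here.

```python
def btrfs_raid5_usable(device_sizes, slice_size=1, min_devices=2):
    """Estimate usable RAID5 capacity under a simplified chunk model.

    Each round allocates one slice on every device that still has room.
    One slice per round is parity, the rest carry data. Allocation stops
    once fewer than `min_devices` devices have free space (assumption).
    """
    free = list(device_sizes)
    usable = 0
    while True:
        candidates = [i for i, f in enumerate(free) if f >= slice_size]
        if len(candidates) < min_devices:
            break
        for i in candidates:
            free[i] -= slice_size
        usable += (len(candidates) - 1) * slice_size
    return usable


def fixed_width_raid5_usable(device_sizes):
    """Classic RAID5: every member is truncated to the smallest drive."""
    return (len(device_sizes) - 1) * min(device_sizes)


# Hypothetical pool of four drives (units are arbitrary, e.g. TiB).
drives = [4, 4, 2, 1]
flexible = btrfs_raid5_usable(drives)      # 7
classic = fixed_width_raid5_usable(drives)  # 3
```

In this toy model the stripe narrows as smaller drives fill up, so the same four drives yield 7 units instead of the 3 units a fixed-width RAID5 would provide.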

ZFS: Transaction-Based Performance Logic

ZFS, by contrast, follows a performance-first strategy. It adopts a variable stripe width approach and dynamically determines the stripe layout for each write transaction.

The advantage of ZFS lies in eliminating the traditional RAID Read-Modify-Write (RMW) cycle, which significantly improves I/O efficiency. Every RAID-Z write is a full-stripe write: ZFS calculates parity at write time based on the size of the data being written, so the parity of one write never covers data belonging to another.
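The variable stripe width can be illustrated with a little arithmetic. The sketch below estimates how many sectors a single block occupies on a RAID-Z vdev: parity is added per row of data sectors, and the total is padded to a multiple of (parity + 1). This follows the allocation-size calculation as commonly described for OpenZFS RAID-Z; the function name and the example geometry are assumptions for illustration, not an official API.

```python
def raidz_alloc_sectors(data_sectors, disks, parity):
    """Sectors consumed by one block on a RAID-Z vdev (simplified).

    data_sectors: block size divided by the sector size
    disks:        number of disks in the RAID-Z vdev
    parity:       1, 2 or 3 for RAID-Z1/Z2/Z3
    """
    data_columns = disks - parity
    rows = -(-data_sectors // data_columns)   # ceiling division
    total = data_sectors + rows * parity      # each row gets its own parity
    pad = parity + 1
    return -(-total // pad) * pad             # round up to multiple of parity+1


# Hypothetical 6-disk RAID-Z1 with 4 KiB sectors.
big_block = raidz_alloc_sectors(32, disks=6, parity=1)   # 128 KiB block -> 40
tiny_block = raidz_alloc_sectors(1, disks=6, parity=1)   # 4 KiB block  -> 2
```

Because each block carries its own parity, a small write never has to read and modify an existing stripe, but small blocks pay proportionally more parity overhead (here, 2 sectors allocated for 1 sector of data).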

If your environment is filled with various spare old drives, the architecture of Btrfs is well suited for mixing drives of different ages and capacities. However, if you pursue peak I/O throughput and enterprise-grade consistency, the transaction flow architecture of ZFS holds a clear advantage.

2. The Cost of Expansion: Rebalancing vs. Dynamic Data Flow

When storage capacity runs low and a new disk is added, what happens? This is one of the most commonly misunderstood aspects among operations teams.

The Cost of a Full Rewrite in Btrfs

To utilize the new disk and achieve a wider stripe width, Btrfs must perform the btrfs balance operation. The impact is a full rewrite process. For arrays of several terabytes, this process may take several days and generate substantial I/O load.

As a result, only after the lengthy rewrite process is completed will the data distribution and RAID level be fully converted.
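A rough back-of-the-envelope estimate shows why a balance can run for days. The sketch below simply divides the amount of data to rewrite by a sustained throughput. Both input figures are assumptions chosen for illustration; real balance throughput depends heavily on the workload, fragmentation, and how much the array is used while the balance runs.

```python
SECONDS_PER_DAY = 86_400


def balance_days(data_tb, sustained_mb_per_s):
    """Days needed to rewrite `data_tb` terabytes at a sustained rate.

    Uses decimal units (1 TB = 1e12 bytes, 1 MB = 1e6 bytes).
    """
    seconds = (data_tb * 1e12) / (sustained_mb_per_s * 1e6)
    return seconds / SECONDS_PER_DAY


# Assumed example: 20 TB of data, 80 MB/s effective rewrite rate.
estimate = balance_days(20, 80)   # roughly 2.9 days
```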

The Elegance and Pitfalls of ZFS: Logical Reflow

ZFS does not require moving existing data during expansion; there is no forced rewrite. Instead, the ZFS Storage Pool Allocator (SPA) monitors free capacity and preferentially directs new writes to the devices with more free space, which are usually the newly added ones. This is a form of capacity-weighted allocation.

However, there is a common misconception about expansion: many people mistakenly believe that the data will automatically be rebalanced after expansion. This is incorrect. Existing data remains on the original disks, resulting in read hotspots. The IOPS of newly added disks provide limited benefit when accessing that existing data.
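The effect can be sketched with a toy model in which new writes are split across vdevs in proportion to their free space. This is a simplification: the actual OpenZFS allocator is considerably more involved, and the function name and the pool layout below are hypothetical.

```python
def distribute_writes(vdevs, write_gib):
    """Split a new write across vdevs in proportion to free space.

    vdevs: mapping of name -> {"size": GiB, "used": GiB}
    Returns a mapping of name -> GiB of the new write placed there.
    Existing data is never moved.
    """
    free = {name: v["size"] - v["used"] for name, v in vdevs.items()}
    total_free = sum(free.values())
    if write_gib > total_free:
        raise ValueError("not enough free space in the pool")
    return {name: write_gib * f / total_free for name, f in free.items()}


# Hypothetical pool: an 80% full vdev plus a freshly added empty one.
pool = {
    "old": {"size": 10_000, "used": 8_000},
    "new": {"size": 10_000, "used": 0},
}
placement = distribute_writes(pool, 1_200)
# placement == {"old": 200.0, "new": 1000.0}
```

The new vdev absorbs most of the new writes, but the 8,000 GiB already stored on the old vdev stays where it is, so reads of that data are still served only by the old disks.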

In such cases, true balance can be achieved by manually rewriting the existing data (ZFS rewrite) or, for RAID-Z vdevs, by using the newer RAIDZ Expansion feature. Note, however, that if snapshots exist, rewriting may double space usage, because the snapshots continue to reference the old blocks.

Recent versions of OpenZFS already offer zpool remove and other device modification capabilities, along with tools that can be used for rebalancing. Put simply, the ZFS expansion model differs from Btrfs’s dynamic expansion, but it is not entirely static.

In practice, it is better to size the pool correctly up front and avoid later expansion. Enterprises should estimate the required capacity in advance and purchase accordingly to avoid future complications. This is also a drawback of ZFS: expanding an entire RAID set is less flexible than in Btrfs.

3. The Core Battleground of Data Integrity: RAID Write Hole

The “write hole” refers to a critical risk that occurs when a power failure happens during the write process, potentially resulting in inconsistency between data and parity.
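The problem is easy to reproduce with plain XOR parity. The following sketch models a two-data-disk RAID5 stripe that is updated in place: the data block is rewritten, but power is lost before the parity block is updated. The block contents are arbitrary illustrative values.

```python
def xor_parity(*blocks):
    """XOR equally sized blocks together (RAID5-style parity)."""
    out = bytearray(len(blocks[0]))
    for block in blocks:
        for i, byte in enumerate(block):
            out[i] ^= byte
    return bytes(out)


# A consistent stripe: two data blocks and their parity.
d0, d1 = b"AAAA", b"BBBB"
parity = xor_parity(d0, d1)

# With consistent parity, a lost d1 can be rebuilt from d0 and parity.
assert xor_parity(d0, parity) == d1

# In-place update of d0; power fails before parity is rewritten.
d0_new = b"CCCC"
stale_parity = parity

# Later the disk holding d1 dies. Rebuilding from the survivors
# silently produces the wrong contents for d1.
rebuilt_d1 = xor_parity(d0_new, stale_parity)
assert rebuilt_d1 != d1
```

Nothing was ever written to d1, yet it is the block that ends up corrupted after the rebuild. That is what makes the write hole so dangerous.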

ZFS: Structural Immunity

ZFS inherently prevents this issue at the architectural level through several mechanisms. The first is atomic updates: ZFS commits changes in Transaction Groups (TXGs) and records each commit in a ring buffer of uberblocks. On import, the system scans the ring buffer to locate the latest TXG with a valid checksum. Incomplete TXGs (those being written at the time of a power failure) are ignored outright, and the system returns to the last consistent state.
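The ring-buffer scan can be sketched in a few lines. This is a conceptual model only: the slot layout, the field names, and the use of SHA-256 are assumptions made for illustration and do not reflect the actual on-disk uberblock format.

```python
import hashlib
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class UberblockSlot:          # hypothetical, simplified slot
    txg: int                  # transaction group number
    root_pointer: bytes       # stand-in for the pointer to the tree root
    checksum: bytes           # checksum stored alongside the slot


def slot_checksum(txg: int, root_pointer: bytes) -> bytes:
    return hashlib.sha256(txg.to_bytes(8, "little") + root_pointer).digest()


def write_slot(txg: int, root_pointer: bytes) -> UberblockSlot:
    return UberblockSlot(txg, root_pointer, slot_checksum(txg, root_pointer))


def pick_active(ring: List[UberblockSlot]) -> Optional[UberblockSlot]:
    """Return the valid slot with the highest TXG, ignoring torn writes."""
    valid = [s for s in ring
             if s.checksum == slot_checksum(s.txg, s.root_pointer)]
    return max(valid, key=lambda s: s.txg, default=None)


ring = [write_slot(100, b"root-100"), write_slot(101, b"root-101")]

# TXG 102 was being written when power failed: its contents are torn,
# so the stored checksum no longer matches.
torn = write_slot(102, b"root-102")
torn.root_pointer = b"root-1"
ring.append(torn)

active = pick_active(ring)    # the slot for TXG 101
```

Because the newest slot fails verification, the scan falls back to TXG 101, which still points at a fully consistent tree.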

The second is the Merkle Tree. The entire file system is structured as a massive hash tree, where any corruption of underlying data causes upper-level checksum validation to fail, thereby preventing silent data corruption. The Uberblock serves as the sole trust anchor for this tree and is never overwritten in place.
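A small hash tree shows why corruption cannot stay hidden. The sketch below builds a binary Merkle tree over a list of data blocks. ZFS actually stores each child's checksum in the parent's block pointer rather than using a strict binary tree, so this is an illustration of the principle and not of the on-disk layout. SHA-256 and the function names are assumptions.

```python
import hashlib


def digest(data: bytes) -> bytes:
    return hashlib.sha256(data).digest()


def merkle_root(blocks):
    """Compute the root hash of a binary Merkle tree over `blocks`."""
    if not blocks:
        raise ValueError("at least one block is required")
    level = [digest(b) for b in blocks]
    while len(level) > 1:
        if len(level) % 2:                 # duplicate last node if odd
            level.append(level[-1])
        level = [digest(level[i] + level[i + 1])
                 for i in range(0, len(level), 2)]
    return level[0]


blocks = [b"block-0", b"block-1", b"block-2", b"block-3"]
trusted_root = merkle_root(blocks)

# Flip a single byte in one leaf: the root no longer matches.
corrupted = list(blocks)
corrupted[2] = b"block-X"
assert merkle_root(corrupted) != trusted_root
```

As long as the root is trusted, any change below it is detected on read, and with redundancy available the damaged block can be rebuilt from a good copy.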

Btrfs: From Experimental Status to Redemption Through RST

Btrfs RAID5/6 had long been labeled as “experimental,” precisely because of the fatal write hole vulnerability.

Linux kernel 6.2 reworked the RAID5 read-modify-write (RMW) path so that checksums are verified during the full RMW cycle before data is overwritten, which improves safety at the cost of some performance overhead. The RAID Stripe Tree (RST), a new tree structure that manages physical layout on a per-extent basis and is intended to eventually address the write hole vulnerability, was merged later, in Linux kernel 6.7. At the time of that merge, RST support for RAID5/6 was still described as in development.

According to the official Btrfs status page on Read the Docs (as of Linux kernel 6.17), most Btrfs features are marked as OK or Mostly OK, but RAID5/6 is not: it remains unsuitable for production use. As of Linux kernel 6.12, experimental features require CONFIG_BTRFS_EXPERIMENTAL to be enabled, and known issues remain. The underlying causes of the write hole have not been completely eliminated. The current implementation mitigates certain write corruption scenarios, but its long-term behavior still requires further observation.

In current mainstream Linux kernels (e.g., the 6.x series), native RAID5/6 support in Btrfs has shown gradual improvement. Over the past few years, the Btrfs community and developers have patched known bugs in the RAID5/6 implementation, including fixes for race conditions and improvements to scrub behavior. However, the core RAID5/6 implementation has not yet reached full maturity or stability. In certain edge cases—such as data recovery following device failure—issues with reliable recovery may still occur.

In other words, although the handling of RAID5/6 in modern kernels has become more mature than in the past, it still falls short of the robustness offered by RAID1/10 or mature implementations such as RAID-Z in ZFS. In technical discussions, therefore, it remains advisable to use more stable RAID configurations—such as RAID1/10, or RAID5/6 built with mdadm and layered with Btrfs on top—as alternatives, rather than relying directly on Btrfs’s native RAID5/6 implementation in critical production environments.

In practice, although Linux kernel 6.2 and later improve certain aspects of Btrfs’s write and checksum handling, the write hole persists, particularly in corner cases involving power loss combined with device failure. Scrub and balance operations can also be slow: on large-capacity arrays, they may take days or even weeks to complete.

4. Risks Under Snapshots: Hidden Concerns in Btrfs

Snapshots are a killer feature of CoW file systems, but during array expansion or reorganization, snapshots may become a burden. In ZFS, datasets and snapshots are formally separated entities. In contrast, Btrfs subvolumes and snapshots maintain the flexibility of sharing the same internal namespace.

The risk with Btrfs lies in performing a balance operation while holding a large number of snapshots, as updating extents referenced by those snapshots can trigger a delayed refs explosion. This may cause performance to drop to near zero, and there have even been catastrophic incidents where metadata verification failures prevented the file system from being mounted. However, many of these issues have been mitigated in newer Btrfs releases and recent Linux kernel versions.

ZFS’s resilience is reflected in the fact that its block pointers are immutable. Reflow only adjusts the physical layout at the RAID layer and is independent of file system–level features such as snapshots. Even with thousands of snapshots, expanding the array will not threaten metadata integrity or cause a performance collapse.

How Should Enterprise and Home Users Choose?

When evaluating the suitability of Btrfs in enterprise environments, the key consideration is not whether it offers advanced features, but whether it can serve as a storage foundation capable of withstanding worst-case scenarios. From an engineering perspective, Btrfs is already quite mature in RAID1, RAID10, or single-disk and multi-copy configurations, and it is widely used for system disks, image management, and snapshot rollback scenarios in Linux distributions.

However, when storage requirements move toward the capacity efficiency and fault-tolerance levels most valued by enterprises (e.g., RAID5/6), the risk model of Btrfs may not fully satisfy enterprise expectations for predictable failure modes and data recovery reliability. Although Btrfs RAID5/6 has gradually addressed certain known issues in the current mainstream Linux kernel 6.x series, its implementation is still explicitly marked as not recommended for critical production environments. This leads some enterprises to consider whether they are willing to take on additional uncertainty in incident handling, liability delineation, and long-term operations and maintenance.

Overall, Btrfs is not “unsuitable for enterprise use,” but is better positioned at the system layer, the platform layer, or within storage architectures that provide upper-layer protection. By contrast, for scenarios that carry core enterprise data, require years of stable operation, and heavily depend on the capacity efficiency of RAID5/6, solutions with stronger engineering maturity and risk controllability should still be prioritized.

By comparison, ZFS has a more clearly defined role in enterprise environments. Its core design assumes that the system must carry critical data over the long term and must still maintain data consistency and recoverability under extreme conditions such as hardware failure, power anomalies, or simultaneous multi-disk failures.

ZFS integrates the file system and disk management into a single storage stack. Through end-to-end checksums, Copy-on-Write, and the tight coupling of RAID-Z, it establishes predictable failure modes and mature self-healing processes, which are precisely the engineering characteristics most valued by enterprises.

Therefore, ZFS is particularly well suited for mission-critical enterprise storage, virtualization backends (e.g., VMs and container volumes), large-scale NAS deployments, and backup repositories. This is especially true in environments where parity-based architectures such as RAID5/6 are required to balance capacity efficiency with data integrity, as ZFS delivers superior stability and more predictable failure behavior than most general-purpose file systems.

Of course, ZFS comes at the cost of greater hardware demands, higher overall costs, and a more stringent expansion model. However, at the enterprise scale, this design philosophy of trading resources for predictability is often more acceptable to organizations concerned with long-term operations, regulatory compliance, and risk management.

Both File Systems Have Their Own Strengths

With the RAID-Z model, ZFS integrates end-to-end checksumming and self-healing into the storage stack, whereas Btrfs relies primarily on its RAID profile and scrub operations for verification and repair. Btrfs also still carries distinct limitations and risks in metadata and RAID5/6 scenarios.

ZFS has relatively high RAM requirements, particularly when deduplication is enabled. This must be carefully considered during deployment, because it can significantly increase overall system cost, especially when memory prices are high. In return, ZFS delivers better performance and stronger data integrity.

Here is a comparison of the main differences between the two file systems:

| Aspect | Btrfs | ZFS |
| --- | --- | --- |
| Hardware Flexibility | Supports mixing disks of different capacities | More restrictive (VDEV constraints) |
| Expansion Impact | High (requires full rewrite via balance) | Low (reflow, no rewrite required, but read hotspots must be monitored) |
| Write Hole Protection | Partial; depends on Linux kernel version (6.2+ improvements, RAID5/6 still not production-ready) | Architectural immunity (Atomic TXG) |
| Snapshot Expansion Stability | Risk (Delayed Refs Explosion) | Safe (Immutable BP) |

For large-scale environments where “data protection” and “long-term reliability” are non-negotiable priorities, OpenZFS is the definitive choice. Its stability, along with the Merkle Tree and atomic updates, provides mathematically verifiable integrity.

However, for experienced Linux users who require flexible disk management (for example, mixing old drives of varying capacities in a home lab) and who are comfortable managing kernel version compatibility and operational details, Btrfs offers substantial flexibility.

Please remember that regardless of which one you choose, SMR drives should never be used in a NAS environment. Whenever possible, always specify CMR drives. This is because SMR drives exhibit relatively poor random write performance, making them unsuitable for frequent write operations. HDD manufacturers, including Western Digital and Seagate Technology, are advancing next-generation technologies such as Microwave-Assisted Magnetic Recording (MAMR) and Heat-Assisted Magnetic Recording (HAMR) to break through capacity bottlenecks, and new drive models will also adopt these technologies.

Reposted with permission from CyberQ